Why Is Big Data Analytics Trending? Advantages, Applications, and Challenges of Big Data

- Richa Grover

- Sep 18, 2019

This blog covers the fundamentals of big data: key terms, real-world examples, importance, types, and characteristics. It also covers challenges, applications, and the working mechanisms of the technologies used to process it. Big data refers to data sets that are huge in size and keep growing exponentially with time.

Topics Covered

- How to Define Big Data?

- Types of Big Data

- Importance of Big Data

- Challenges of Big Data

- Applications of Big Data

- Working Mechanisms for Big Data

How to Define Big Data?

The concept of big data arose from the immense increase in the volume of data, which makes structuring and sorting that data difficult. The term ‘big data’ ordinarily describes a mixed dataset that is huge in size and combines both structured and unstructured data. It also covers the process of collecting, preparing, and evaluating this data from varied sources. Examples of big data sources include Facebook, stock exchanges, search engines such as Google, and the data produced by airlines.

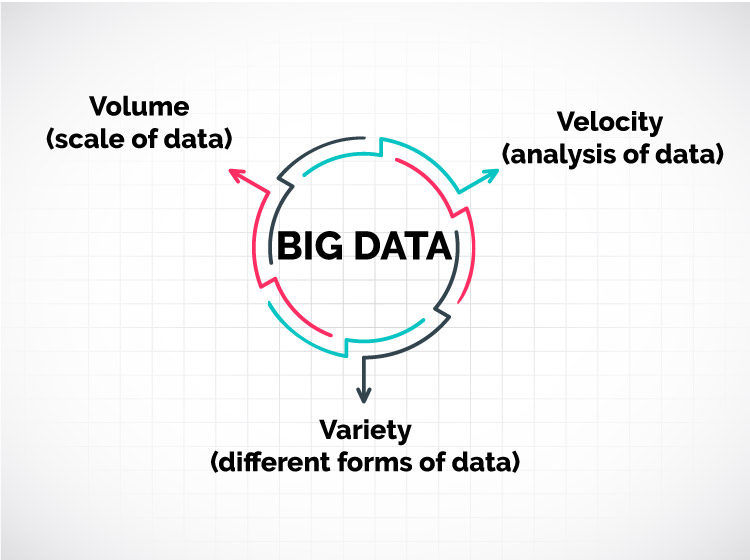

Big data characteristics are normally explained through the 3 V’s: Volume, Velocity, and Variety. Volume is the main distinguishing element when classifying data as big data. The leading social media websites receive a humongous quantity of data on a regular basis, measured in terabytes or more and collected in files, records, and tables. Supervising such data through conventional methods becomes really difficult. The second is Velocity: the rate at which data is received and processed. By one commonly cited estimate, around 2.5 quintillion bytes of data are generated every day, which is impractical to handle with traditional methods. The third is Variety, which refers to the range of sources the data is collected from. Variety spans both the structure and the category of the data; text, video, and machine-generated images are some of the different categories. Other popular characteristics are veracity, value, and variability.
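To put the velocity figure in perspective, the daily estimate can be converted into an average per-second rate. This is only a rough sketch: it assumes the 2.5 quintillion bytes per day figure quoted above and decimal units (1 TB = 10^12 bytes), and the names used are illustrative.

```python
# Convert the quoted daily data volume into an average per-second rate.
BYTES_PER_DAY = 2.5e18           # 2.5 quintillion bytes, the estimate quoted above
SECONDS_PER_DAY = 24 * 60 * 60   # 86,400 seconds in a day


def bytes_per_second(bytes_per_day: float) -> float:
    """Average rate implied by a daily volume."""
    return bytes_per_day / SECONDS_PER_DAY


rate = bytes_per_second(BYTES_PER_DAY)
rate_tb = rate / 1e12            # decimal terabytes per second (assumed unit)
print(f"{rate_tb:.1f} TB per second")  # prints "28.9 TB per second"
```

An average of roughly 29 terabytes every second gives a sense of why a single machine with conventional tools cannot keep up.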

Types of Big Data

Big Data is regularly classified into three different categories: structured, semi-structured and unstructured.

- Structured: Any data that can be readily accessed, stored, and processed in a fixed format is characterized as structured data. The format of the stored data is known in advance. An example is the values of a particular table saved in a database.

- Unstructured: Any data with no known form or format is unstructured data. Here, data comes from independent sources and is a combination of text, video, and audio records. An example is the set of results returned by a search engine.

- Semi-structured: It combines traits of both structured and unstructured data. The data has a defined form but is not stored in a relational database system. Data saved in an XML file is a typical example of semi-structured data.
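The three categories can be illustrated with a small Python sketch. The sample records, names, and fields below are invented for illustration; only the standard library is used.

```python
import csv
import io
import xml.etree.ElementTree as ET

# Structured: fixed columns known in advance, like rows of a database table.
table = io.StringIO("id,name,age\n1,Asha,31\n2,Ben,27\n")
rows = list(csv.DictReader(table))

# Semi-structured: tagged fields (XML), but records need not share a schema.
xml_doc = (
    "<users>"
    "<user id='1'><name>Asha</name><tag>vip</tag></user>"
    "<user id='2'><email>ben@example.com</email></user>"
    "</users>"
)
root = ET.fromstring(xml_doc)
fields_per_user = [sorted(child.tag for child in user) for user in root]

# Unstructured: free text with no predefined fields.
note = "Asha called support on Monday about a late delivery."

print(rows[1]["age"])     # lookup by a known column
print(fields_per_user)    # the available fields differ per record
print(len(note.split()))  # only crude measures such as word count are direct
```

The structured rows can be queried by column name straight away, the XML records have to be inspected to learn which fields each one carries, and the free text offers no fields at all until some analysis extracts them.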

Importance of Big Data

The benefits of processing big data include:

- Improvement in customer services.

- Better efficiency in operations.

- Using outside knowledge during the decision-making process.

- Early identification of risks to products and services.

- Detecting defects, errors, and fraud in the organization.

- Better insight into sales and services.

- Cost reductions and savings.

- Increased revenue and agility.

Challenges of Big Data

- Data storage: Because data grows so rapidly over short periods of time, the central difficulty is storing and organizing it.

- Data refining: This is the most tedious task and the biggest challenge of the whole process. Cleaning such a vast amount of data is laborious, yet curating it so that it is understandable and usable is what makes it relevant.

- Keeping up with the pace: Big data technology keeps evolving. A few years ago Apache Hadoop was catching everyone’s eye, then came Apache Spark, and now a combination of both is in the market.

- Cybersecurity risks: Large volumes of data bring an added risk of security breaches. Companies holding such data are becoming targets for cybercrime.

Applications of Big Data

Big data technology has become an integral part of the complete business cycle and has a diverse range of applications.

- Weather forecasting: Many mobile applications are used to forecast the weather. Forecasts can become more accurate with readings from barometers, ambient thermometers, and hygrometers. Related uses include studying the effects of global warming and preparing response measures for crises.

- Advertising: Marketing trends have changed, and prices are no longer set simply on the basis of reactions. Data points such as surveys, traffic patterns, shopping habits, eye-movement patterns, and movie selections are used to determine what kinds of advertisements people are interested in.

- Personal grooming: Big data is being used to optimize personal health and development. Fitbit bands, sleep monitoring, calorie counting, and workout tracking all help build insight into personal development.

- Education: Educational institutions hold huge amounts of data related to students, faculty, and courses. Proper analysis of this data can improve the effectiveness and performance of institutes. Areas where big data can play a relevant role include grading mechanisms, career predictions, structuring course materials, and dynamic learning programs. It has also opened the doors to diverse e-learning courses.

- Health industry: This is another area that produces a huge pile of data. With proper analysis of this data, the number of unnecessary diagnoses can drop considerably, and medicines can be prescribed on the basis of proper research and analysis of past results. Many health bands have also been launched, making users more aware of their health.

- Transportation: Areas of transportation where big data can be used include determining traffic safety levels, traffic control and congestion management, and route planning. The real-time routes that taxis follow, along with traffic considerations, are managed through big data. These uses have positive impacts such as pollution control, time savings, and improved safety measures.

- Banking: This is another sector where the size of data is increasing at a rapid rate. Uses of big data in banking include mitigating risk, bringing clarity to the business, and detecting misuse of debit/credit cards and money laundering.

Working Mechanisms for Big Data

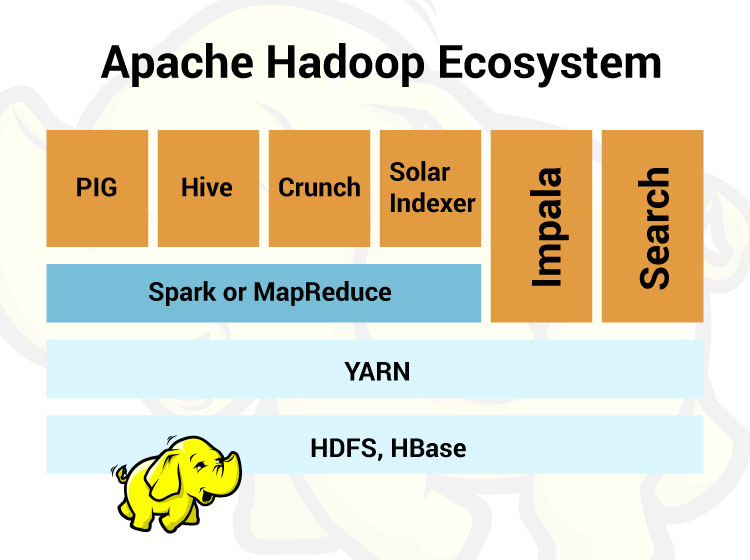

Many architectures have been proposed to manage data effectively. One of them is Hadoop, currently one of the best open-source tools, available under the Apache license, for processing large piles of data.

Hadoop consists of two main components: HDFS (Hadoop Distributed File System) and the MapReduce engine.

The Hadoop ecosystem comprises three main components:

- Hadoop Common: A set of predefined libraries from the Apache foundation that the other components in the ecosystem can use.

- Hadoop Distributed File System (HDFS): This is the default data storage. It stores the chunks of data until the user needs to process them for analysis. It replicates data across different nodes of the cluster for reliable and easy access. For this, it uses three main components: the NameNode, the DataNode, and the Secondary NameNode. It works on the master-slave principle: the NameNode acts as the master that monitors the storage cluster, and the DataNodes operate as slave nodes.

- MapReduce: This is where the data gets processed. MapReduce breaks a large dataset into smaller pieces so that they can be worked on efficiently and in parallel. The basic mechanism is that the “Map” step sends processing tasks to different nodes in the Hadoop cluster, and the “Reduce” step combines all the results into a single output.
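The Map and Reduce steps described above can be sketched in plain Python with a word count, the usual introductory example. This is not Hadoop's API: the function names and input chunks are hypothetical, and everything runs sequentially in one process, whereas Hadoop would run the map tasks in parallel on different nodes.

```python
from collections import defaultdict


def map_phase(chunk):
    """Map: emit a (word, 1) pair for every word in one chunk of input."""
    return [(word.lower(), 1) for word in chunk.split()]


def shuffle(pairs):
    """Group emitted values by key, as the framework does between phases."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Reduce: combine all values for one key into a single result."""
    return key, sum(values)


# Hypothetical input splits; in Hadoop each would go to a different node.
chunks = ["big data is big", "data is everywhere"]

mapped = [pair for chunk in chunks for pair in map_phase(chunk)]
counts = dict(reduce_phase(k, v) for k, v in shuffle(mapped).items())
print(counts)  # {'big': 2, 'data': 2, 'is': 2, 'everywhere': 1}
```

Because each chunk is mapped independently, the map work can be spread over as many machines as there are chunks; only the grouping step needs to bring values for the same key together.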

Data access components

- Pig: It is a tool for analyzing large data sets. Its programs are structured so that they can be parallelized, which makes it efficient when working with huge data. Another important feature is that it can mask critical information during processing.

- Hive: It is a data warehouse built on top of Hadoop. It uses the HiveQL language for processing and querying data. This language is similar to SQL, and its queries are turned into jobs that run on the Hadoop cluster.
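To give a feel for how SQL-like such queries are, here is a small sketch that uses Python's built-in sqlite3 module as a local stand-in. It does not connect to Hive, and the page_views table and its rows are invented for illustration. The SELECT statement itself uses only constructs (COUNT, GROUP BY, ORDER BY) that HiveQL also supports.

```python
import sqlite3

# In-memory SQLite database standing in for a warehouse table (not Hive).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE page_views (user_id TEXT, page TEXT)")
conn.executemany(
    "INSERT INTO page_views VALUES (?, ?)",
    [("u1", "home"), ("u2", "home"), ("u1", "pricing"), ("u3", "home")],
)

# An aggregate query of the kind typically written in HiveQL.
query = """
SELECT page, COUNT(*) AS views
FROM page_views
GROUP BY page
ORDER BY views DESC
"""
results = conn.execute(query).fetchall()
conn.close()
print(results)  # [('home', 3), ('pricing', 1)]
```

On a real cluster the same kind of statement would be submitted to Hive, which would execute it over data stored in HDFS rather than over an in-memory table.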

Conclusion

I hope this blog has been an informative introduction to big data, how it works, and its applications, along with the challenges it brings. Big data is the need of the hour: as the quantity of data constantly increases, processing it is becoming a daunting task. Its applications include weather forecasting, transportation services, banking, and the health industry. Hadoop is one of the best-known tools for overcoming the difficulties of managing big data. For more blogs, keep exploring and reading Analytics Steps.

