History of Artificial Intelligence

- Mallika Rangaiah

- Mar 08, 2021

From healthcare, education, manufacturing, and law to finance and even politics, AI is everywhere today. Yet treating AI as a purely modern phenomenon would be a mistake.

Interestingly, the concept of AI had been steadily building in the minds of our predecessors for quite some time, with classical philosophers describing human thinking as a symbolic system. Artificial beings featured in the myths of the ancient Greeks, while the earliest records of automata can be traced back to ancient Greece and China.

Speaking of myths, you can spare a glance at our blog on Myths of AI.

Did you know that the term artificial intelligence was coined back in 1956 by John McCarthy, at a Dartmouth College conference?

From the 1950s onward, various scientists, programmers, and theorists helped cement the modern idea of AI, with steady advancements and innovations turning the field from a mere figment of our imagination into a reality.

In this blog, we will discuss some of the interesting events that have contributed to the history and evolution of AI.

Timeline of Artificial Intelligence

You might assume that the journey of AI began in 1956, when John McCarthy coined the term, but the story of the evolution of AI started long before that date.

AI in 250 BC

That’s right, it all began back in 250 BC when Ctesibius, a renowned Greek inventor and mathematician, created the world’s first artificial automatic self-regulatory system. Named the clepsydra, or “water thief”, the system was developed to ensure that the container used in water clocks (clocks that mark the passing of time) remained full.

AI in 380 BC - late 1600s

During this period, many theologians, mathematicians, and philosophers published works that explored mechanical techniques and numeral systems, ultimately giving rise to the notion of mechanized “human” thinking in non-human objects. For instance, the Catalan poet and theologian Ramon Llull published Ars generalis ultima (The Ultimate General Art), refining his method of using paper-based mechanical means to develop new knowledge through combinations of concepts.

AI in Early 1700s

Jonathan Swift’s novel “Gulliver’s Travels” described a device called the engine, one of the earliest references to modern-day technology, in particular the computer. The device’s primary aim was to enhance knowledge and mechanical operations until even the least talented human would appear skilled, through the knowledge and aid of a nonhuman mind simulating artificial intelligence.

AI in 1872

In 1872, the author Samuel Butler published his novel “Erewhon”, which explored the idea that at some point in the future machines might possess consciousness.

AI from 1900-1950

As the 1900s began, the pace of advancements related to AI picked up sharply. The history of AI in the 1900s is an interesting one.

1921: The science fiction play “Rossum’s Universal Robots” by the Czech playwright Karel Čapek premiered. The play introduced the idea of factory-made artificial people, whom he named robots. This is the first known use of the term. Afterward, many people adopted the robot concept and applied it in their art and research.

1927: In this year the sci-fi film Metropolis (directed by Fritz Lang) was released. This film is well known for being the first on-screen portrayal of a robot, sparking inspiration for other renowned non-human characters in the future.

1929: Gakutensoku, the first robot built in Japan, was created by the Japanese biologist and professor Makoto Nishimura. The name literally means “learning from the laws of nature,” implying that the robot’s artificially intelligent mind could gain knowledge from nature and people.

1939: The Atanasoff-Berry Computer (ABC), an early electronic digital computer, was developed at Iowa State University by the inventor and physicist John Vincent Atanasoff with Clifford Berry, his graduate student assistant. The machine was not programmable in the modern sense; it was built for a single task. It weighed more than 700 pounds and was capable of solving up to 29 simultaneous linear equations.

1949: In this year, the book “Giant Brains: Or Machines That Think” was published by the mathematician and actuary Edmund Berkeley. The book highlighted how machines were becoming more and more adept at handling large amounts of information. It also compared the ability of machines with the human mind, concluding that machines can actually think.

AI in the 1950s

The 1950s was a decade in which AI research accelerated, leading to a series of advances in the field.

1950: “Programming a Computer for Playing Chess”, the first article on creating a chess-playing computer program, was published by Claude Shannon, who is known as the “father of information theory”.

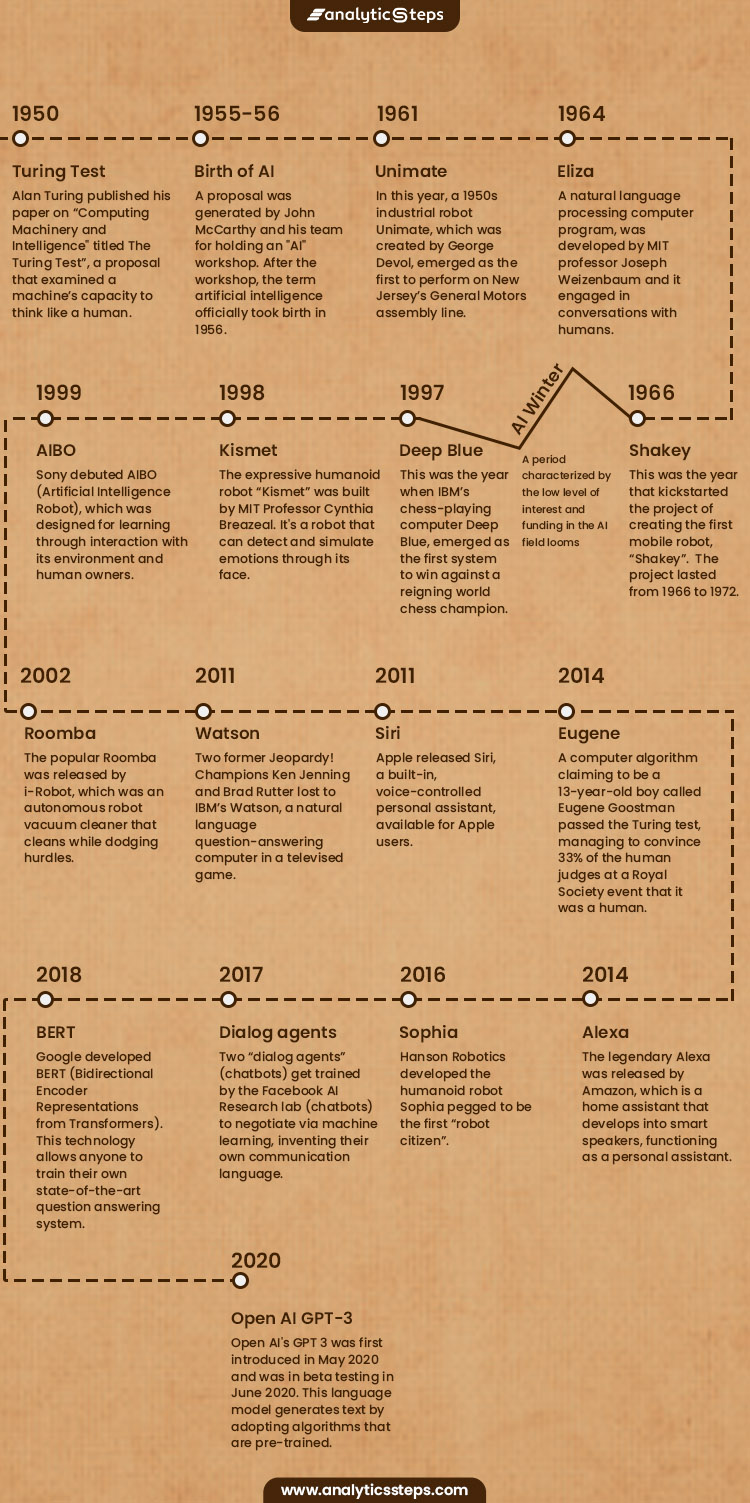

1950: One of the turning points in the history of AI came this year, when Alan Turing published his paper “Computing Machinery and Intelligence.” The paper explored the idea of “The Imitation Game”, later known as “The Turing Test”, a proposal for examining a machine’s capacity to think like a human. This test became an integral element in the field of artificial intelligence.

You can also check out our blog on AI vs human intelligence

1952: A program for playing checkers was created by Arthur Samuel. It was among the first game-playing programs able to compete against human players in checkers.

1955: Logic Theorist, the first AI computer program, was written this year by Allen Newell (researcher), Herbert Simon (economist), and Cliff Shaw (programmer). It ultimately proved 38 of the first 52 theorems in Whitehead and Russell's Principia Mathematica.

1955: A proposal for holding an “artificial intelligence” workshop was drawn up by John McCarthy, an American computer scientist, and his team.

1956: The workshop took place at Dartmouth College, and the term artificial intelligence was officially born there, courtesy of John McCarthy.

1958: Lisp, a high-level programming language for AI research that is still in use today, was developed by McCarthy at MIT.

1959: The term “machine learning” was coined by Arthur Samuel as he worked on programming a computer to play checkers well enough to compete against human players.

AI in the 1960s

This decade was characterized by the rise in the development of automatons and robots, fresh programming languages, research, and films depicting artificially intelligent beings.

1961: In this year, Unimate, an industrial robot invented by George Devol in the 1950s, became the first robot to work on a General Motors assembly line in New Jersey. Its duties included tasks considered too risky for humans, such as transporting die castings from the assembly line.

1961: SAINT (Symbolic Automatic Integrator) was developed by James Slagle, a computer scientist and professor. The program solved symbolic integration problems of the kind found in freshman calculus.

1964: An early artificial intelligence program termed STUDENT was written in Lisp by Daniel G. Bobrow, an American computer scientist. The program was developed for reading and solving algebra word problems.

1966: Ironically, the first chatbot, Eliza, a natural language processing computer program developed by MIT professor Joseph Weizenbaum, had been designed as a parody rather than something people could connect with. Yet people began forming real emotional connections with it. Although Eliza interacted only through text and was incapable of learning from human interactions, she played an integral role in tearing down the communication barriers between humans and machines.

1966: This was the year that kickstarted the project of creating the first mobile robot, “Shakey”. The project, which lasted from 1966 to 1972, was seen as a way of linking the different AI fields with navigation and computer vision. The robot presently resides in the Computer History Museum.

1968: This was the year when the popular sci-fi film 2001: A Space Odyssey was released. Directed by filmmaker Stanley Kubrick, the film featured HAL (Heuristically programmed ALgorithmic computer), the AI that controls the nuclear-powered Discovery One spaceship. The onboard supercomputer bore a certain resemblance to modern voice assistants like Siri and Alexa.

“That may be one of the movie’s greatest achievements: it placed AI into the mainstream consciousness even before the first AI robot, Shakey, was completed in 1969,”

- Murphy, professor at Texas A&M University

1968: SHRDLU, an early natural language computer program, was created by Terry Winograd, a computer science professor at Stanford University.

AI in the 1970s

This decade dealt with advancements in relation to automatons and robots. Yet at the same time, it was also characterized by various challenges like the government scaling down its support for research in the AI field.

1970: This was the year when the first anthropomorphic robot, WABOT-1, was developed in Japan at Waseda University. Its features included movable limbs and the ability to see and converse.

Check out our blog on Conversational AI.

1973: Progress in AI went downhill at this point, when the applied mathematician James Lighthill reported on the state of AI research to the British Science Research Council, claiming that none of the discoveries made so far had produced the expected impact. This resulted in the British government considerably cutting its support for AI research.

1977: The iconic legacy of Star Wars kick-started this year. Directed by George Lucas, the film features C-3PO, a humanoid robot built to serve as a protocol droid and portrayed as fluent in over six million forms of communication, and R2-D2, a small astromech droid that is incapable of human speech and interacts through electronic beeps.

1979: The Stanford Cart, a remotely controlled, TV-equipped mobile robot first created back in 1961, successfully crossed a room filled with chairs without human interference in around 5 hours, making it one of the earliest examples of an autonomous vehicle.

AI in the 1980s

An AI Winter (a period characterized by a low level of interest and funding in the AI field) loomed over part of this decade, although the field was revived as the British government resumed its funding in an effort to compete with Japanese efforts.

1980: WABOT-2 was developed at Waseda University. The humanoid could interact with people, read musical scores, and play music on an electronic organ.

1981: Around $850 million was allotted to the Fifth Generation Computer project by the Japanese Ministry of International Trade and Industry. The project’s aim was to develop computers that could converse, translate languages, interpret pictures, and reason like human beings.

1984: Electric Dreams, a film directed by Steve Barron, was released. It tells the tale of a love triangle between a man, a woman, and a personal computer called “Edgar”.

1984: Roger Schank and Marvin Minsky warned of a looming AI Winter at a meeting of the Association for the Advancement of Artificial Intelligence (AAAI). The warning proved accurate within the next 3 years.

1986: This was the year when the first driverless car, a Mercedes-Benz van equipped with sensors and cameras and built under the guidance of Ernst Dickmanns, drove at up to 55 mph on empty streets.

1988: Judea Pearl, a computer scientist, published “Probabilistic Reasoning in Intelligent Systems” in this year. He is also credited with inventing Bayesian networks, a mathematical formalism for defining complex probability models, along with the primary algorithms used for inference in these models.

1988: Rollo Carpenter, a programmer, built Jabberwacky with the aim of simulating natural human chat in an engaging fashion. This was one of the early approaches towards generating AI via human interaction.

AI in the 1990s

The end of the millennium was characterized by a range of advancements that gradually revolutionized the AI field.

1990: The paper “Elephants Don’t Play Chess” was published by Rodney Brooks. It proposed a fresh approach to AI: building intelligent systems from the ground up, based on ongoing physical interaction with the environment.

1995: A.L.I.C.E (Artificial Linguistic Internet Computer Entity), inspired by Weizenbaum's ELIZA, was built by Richard Wallace, a computer scientist. What set A.L.I.C.E apart from ELIZA was the addition of natural language sample data collection.

1997: Jürgen Schmidhuber and Sepp Hochreiter proposed Long Short-Term Memory (LSTM), a type of recurrent neural network (RNN) architecture that is now used for speech and handwriting recognition.

1997: This was the year when IBM’s chess-playing computer, Deep Blue, emerged as the first system to win against a reigning world chess champion.

1998: Furby, the first domestic or pet toy robot, was invented by Dave Hampton and Caleb Chung.

1998: The expressive humanoid robot “Kismet” was built by MIT Professor Cynthia Breazeal. It's a robot that can detect and simulate emotions through its face, which was modeled on a human face and equipped with eyes, lips, eyelids, and eyebrows.

1999: Following in the footsteps of Furby, Sony debuted AIBO (Artificial Intelligence Robot), which was designed to learn through interaction with its environment and human owners. The robot was capable of understanding and responding to over 100 voice commands.

AI from 2000-2010

Although the millennium set off with the panic caused by the Y2K bug at the very beginning of 2000, once that was resolved the trend of advancements in the world of AI rose like a phoenix.

2000: ASIMO, an artificially intelligent humanoid robot, was released by Honda. The robot is capable of walking as fast as humans and delivering trays to customers in restaurants.

2001: Steven Spielberg’s sci-fi film A.I. Artificial Intelligence was released this year. The movie revolved around David, a childlike android who was uniquely programmed with the ability to love.

2002: The popular Roomba, an autonomous robot vacuum cleaner that cleans while dodging obstacles, was released by iRobot.

2004: This was the year when NASA's robotic exploration rovers, Spirit and Opportunity, navigated the surface of Mars without human intervention.

2004: I, Robot, a film directed by the Australian director Alex Proyas and set in the year 2035, in which humanoid robots serve humankind, was released.

2006: The term "machine reading" was coined by Oren Etzioni, Michele Banko, and Michael Cafarella, defining it as unsupervised autonomous text comprehension.

2007: ImageNet, a large database of annotated images, was assembled by Fei-Fei Li, a computer science professor, and her colleagues. The database aimed to support research on object recognition software.

2009: Google secretly began building a driverless car. By 2012, the car had passed Nevada’s self-driving test.

A brief highlight of Present AI

From this point onward, AI has become fully ingrained in our day-to-day tasks. It dominates every corner of our existence, and we have reached a point where we might even start taking this technology for granted. We have listed a few of the highlights in this field from the recent decade.

2010: Microsoft released Kinect for Xbox 360, which was the first gaming device tracking human body movement through a 3D camera and infrared detection.

2011: Two former Jeopardy! champions, Ken Jennings and Brad Rutter, lost to IBM’s Watson, a natural language question-answering computer, in a televised game.

2011: Apple released Siri, a built-in, voice-controlled personal assistant, available for Apple users. This voice assistant adopts a natural-language user interface for comprehending, observing, responding, and suggesting things to human users by adapting to voice commands.

2012: A massive neural network equipped with 16,000 processors was trained by Google researchers Jeff Dean and Andrew Ng to detect cat images without any background information, by presenting it with 10 million unlabeled images found in YouTube videos.

2013: A computer program called Never Ending Image Learner (NEIL), housed at Carnegie Mellon University, was released this year. The program works 24/7 learning information about images which it discovers on the internet.

2014: Cortana, a virtual assistant created by Microsoft and similar to Apple’s Siri, was released this year.

2014: A chatbot called Eugene Goostman, which posed as a 13-year-old boy, was reported to have passed the Turing test. The program managed to convince 33% of the human judges at a Royal Society event that it was actually a human.

2014: The legendary Alexa was released by Amazon. It is a virtual assistant built into smart speakers, where it functions as a personal assistant for the home.

Speaking of Amazon, you can also sneak a peek at our blog on How Amazon uses Big Data

2015: An open letter was signed by Elon Musk, Stephen Hawking, and Steve Wozniak among 3,000 others requesting a ban on the development and adoption of autonomous weapons for war purposes.

2015-2017: AlphaGo, a computer program developed by Google DeepMind, beat many world (human) champions in the board game Go.

2016: Hanson Robotics developed the humanoid robot Sophia pegged to be the first “robot citizen”. Distinguishing her from her predecessors was her similarity to an actual human being, with her ability to see, make facial expressions, and communicate via AI.

2016: Google Home, a smart speaker that uses AI to serve as a “personal assistant”, was released by Google. It helps users with duties like remembering tasks, creating appointments, and looking up information by voice.

2017: Two “dialog agents” (chatbots) were trained by the Facebook AI Research lab to negotiate via machine learning. Yet as the chatbots interacted, they deviated from human language, inventing their own language for communication.

2018: Alibaba developed an AI model that scored better than humans in a Stanford University reading and comprehension test. Alibaba's language processing model scored 82.44 against the human score of 82.30 on a set of 100,000 questions.

2018: Google developed BERT (Bidirectional Encoder Representations from Transformers). This technology allows anyone to train their own state-of-the-art question answering system.

2020: GPT-3 was introduced by OpenAI in May 2020 and was in beta testing in June 2020. This language model generates text using pre-trained algorithms, i.e. algorithms that have already been fed the data they require for executing the task.

What began in 250 BC is now a technology dominating every nook and corner of the world. Hopefully, this blog has shed some light on the interesting events that make up the history of Artificial Intelligence.
