How to use the Random Forest classifier in Machine learning?

- Rohit Dwivedi

- May 11, 2020

- Updated on: Jan 18, 2021

Classification is one of the main roles of machine learning, and it has great value in business applications. A typical example is classifying whether a loan customer will default or not, and there are many other such applications. Machine learning offers many classification algorithms, including KNN, logistic regression, naive Bayes, and decision trees, but the random forest classifier is among the top performers when it comes to classification tasks.

Random forest is an ensemble method, that is, a combination of different or identical algorithms used together on one task. It is a supervised learning algorithm that can be used for both classification and regression. It is one of the easiest and most widely used algorithms for classification tasks.

If you are not familiar with the decision tree classifier, I recommend going through that concept first, as decision trees are the building blocks of the random forest classifier.

Introduction to Random Forest Classifier

A forest is made up of many trees, and the more trees it has, the more robust it is. In the same way, a random forest builds many decision trees on randomly selected subsets of the data and then collects the votes of those trees to decide the class of a test object.

Let's understand the random forest algorithm in layman's terms through an example. Consider a case where you have to purchase a mobile phone. You can search the internet for different phones, read user reviews on different websites, or ask your friends to recommend one. Suppose you choose to ask your friends. Each friend recommends a phone, you make a list of all the recommended phones, and finally you ask your friends to vote for the best phone on that list. The phone with the most votes is the one you purchase.

The example has two stages. First, you ask each friend to recommend a phone; each friend's recommendation is like the output of a single decision tree. Then you ask them to vote among all the recommended phones. The whole process, from gathering recommendations to voting on them, is what a random forest algorithm does.

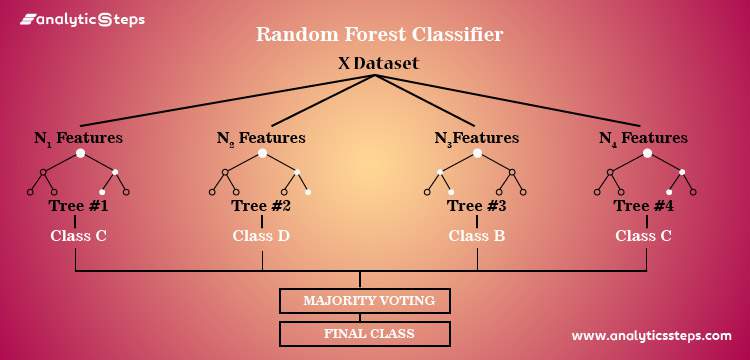

Random forest is based on the divide-and-conquer approach: decision trees are built on randomly drawn subsets of the data, and the resulting collection of trees is called a forest. Each decision tree is grown using a feature selection measure such as information gain, gain ratio, or the Gini index of each feature. Each tree is built from its own independent random sample. In a classification problem, each tree casts a vote and the class with the most votes is chosen. In regression, the average of all the trees' outputs is taken as the result. It is one of the most powerful algorithms in common use.

The key difference between a random forest and a single decision tree is randomness: finding the root node and choosing the attributes to split on is done using random subsets of the data and features rather than the full set.
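To make the Gini index and the two ways of combining trees more concrete, here is a minimal sketch in plain Python. The function names `gini_impurity`, `majority_vote`, and `average_output` are hypothetical helpers chosen for illustration; they are not part of any library.

```python
from collections import Counter


def gini_impurity(labels):
    """Gini impurity of a collection of class labels: 1 - sum(p_k ** 2)."""
    n = len(labels)
    if n == 0:
        return 0.0
    counts = Counter(labels)
    return 1.0 - sum((count / n) ** 2 for count in counts.values())


def majority_vote(tree_predictions):
    """Classification: return the class predicted by the most trees."""
    return Counter(tree_predictions).most_common(1)[0][0]


def average_output(tree_outputs):
    """Regression: return the mean of the trees' numeric outputs."""
    return sum(tree_outputs) / len(tree_outputs)


# A perfectly mixed two-class node has impurity 0.5; a pure node has 0.0.
print(gini_impurity(["yes", "yes", "no", "no"]))
print(gini_impurity(["yes", "yes", "yes"]))

# Three trees vote on one test object.
print(majority_vote(["default", "no default", "default"]))

# Three trees predict a numeric target.
print(average_output([2.0, 3.0, 4.0]))
```

A split is considered good when the child nodes it produces have lower impurity than the parent node.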

How does the algorithm work?

- Pick random samples from the dataset.
- Generate decision trees for each sample and compute prediction results from each decision tree.
- For each predicted result calculate votes.
- Choose the prediction result having maximum votes as the final prediction.
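The four steps can be sketched by hand with scikit-learn's `DecisionTreeClassifier`. This is an illustrative sketch, not how any library implements it: the Iris dataset, the number of trees, and the variable names are assumptions made for the example. Note also that scikit-learn's own `RandomForestClassifier` averages the trees' class probabilities rather than counting hard votes, so its results can differ slightly.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0, stratify=y
)

rng = np.random.default_rng(0)
n_trees = 25  # assumed value for the sketch
trees = []

for _ in range(n_trees):
    # Step 1: pick a random sample (with replacement) from the training data.
    idx = rng.integers(0, len(X_train), size=len(X_train))
    # Step 2: grow a decision tree on that sample. max_features="sqrt" makes
    # each split consider only a random subset of the features.
    tree = DecisionTreeClassifier(
        max_features="sqrt", random_state=int(rng.integers(0, 1_000_000))
    )
    tree.fit(X_train[idx], y_train[idx])
    trees.append(tree)

# Step 3: collect every tree's prediction, shape (n_trees, n_test_samples).
all_preds = np.array([tree.predict(X_test) for tree in trees])

# Step 4: for each test sample, choose the class with the most votes.
final = np.array([np.bincount(votes, minlength=3).argmax() for votes in all_preds.T])

accuracy = (final == y_test).mean()
print(f"Accuracy of the hand-built forest: {accuracy:.3f}")
```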

Working diagram of random forest

The basic parameters used in random forest algorithms are the total number of trees, the minimum split size, the split criterion, etc. The sklearn package in Python offers several other parameters, which are listed in its documentation.
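As a rough illustration of how these parameters map onto scikit-learn, the sketch below sets the number of trees (`n_estimators`), the minimum split size (`min_samples_split`), and the split criterion (`criterion`) on a `RandomForestClassifier`. The dataset and the specific parameter values are assumptions chosen only for the example, not recommended settings.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=42, stratify=y
)

clf = RandomForestClassifier(
    n_estimators=200,      # total number of trees in the forest
    criterion="gini",      # split criterion ("entropy" is another option)
    min_samples_split=4,   # minimum samples a node needs before it can split
    max_features="sqrt",   # features considered at each split
    random_state=42,       # fixed seed so the run is repeatable
)
clf.fit(X_train, y_train)

test_accuracy = clf.score(X_test, y_test)
print(f"Test accuracy: {test_accuracy:.3f}")
```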

Why use the Random Forest algorithm?

- It can be used for both classification and regression tasks.
- Overfitting is a critical problem that can make results poor, but a random forest is far less prone to it: adding more trees does not make the classifier overfit more.
- It can handle missing values (in implementations that support them).
- It can be used for categorical values as well.
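To illustrate the regression case, here is a small sketch using scikit-learn's `RandomForestRegressor` on synthetic data. The dataset from `make_regression` and the parameter values are assumptions for the example. It also checks that the forest's prediction matches the average of the individual trees' outputs.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor

# Synthetic regression data, used only for illustration.
X, y = make_regression(n_samples=300, n_features=5, noise=10.0, random_state=0)

reg = RandomForestRegressor(n_estimators=50, random_state=0)
reg.fit(X, y)

# Prediction from the whole forest for the first five rows.
forest_pred = reg.predict(X[:5])

# Prediction from every individual tree, averaged by hand.
tree_preds = np.array([tree.predict(X[:5]) for tree in reg.estimators_])
manual_average = tree_preds.mean(axis=0)

matches = np.allclose(forest_pred, manual_average)
print("Forest prediction equals the mean of the trees:", matches)
```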

Random forest Vs Decision Tree

A random forest is a set of many decision trees. A single decision tree is faster to train and to predict with. Deep decision trees tend to overfit, whereas a random forest reduces that risk by building many trees on random subsets of the data and combining them. Random forests are complex and hard to explain, while a decision tree is easy to interpret and can be converted into a set of rules.
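A quick way to see this trade-off is to cross-validate both models on the same data and to print a small tree as rules. The sketch below assumes the breast cancer dataset bundled with scikit-learn and default-ish settings; the exact scores depend on the data and the library version.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import cross_val_score
from sklearn.tree import DecisionTreeClassifier, export_text

X, y = load_breast_cancer(return_X_y=True)

tree = DecisionTreeClassifier(random_state=0)
forest = RandomForestClassifier(n_estimators=100, random_state=0)

# 5-fold cross-validated accuracy for each model.
tree_scores = cross_val_score(tree, X, y, cv=5)
forest_scores = cross_val_score(forest, X, y, cv=5)
print(f"Decision tree accuracy: {tree_scores.mean():.3f}")
print(f"Random forest accuracy: {forest_scores.mean():.3f}")

# A shallow single tree can be printed as human-readable rules,
# which is not practical for a forest of a hundred trees.
small_tree = DecisionTreeClassifier(max_depth=2, random_state=0).fit(X, y)
rules = export_text(small_tree)
print(rules)
```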

How can random forests be used to check about feature importance?

A random forest also lets you find out which features are most and least important. Sklearn provides this with the fitted model, showing the contribution of each individual feature to the prediction. It calculates a score for each independent attribute automatically during training, and then scales the scores so that they sum to 1.

These scores help you judge the importance of the independent features, so you can drop the features with the least importance when building the model.

Random forests use Gini importance, also called MDI (mean decrease in impurity), to compute the importance of each attribute. Gini importance is the total decrease in node impurity contributed by splits on a feature, averaged over all trees. A related measure, mean decrease in accuracy, records how much the accuracy or model fit drops when a feature is dropped. In both cases, the larger the decrease, the more relevant the feature. Hence, the mean decrease is an important criterion for feature selection.
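As a sketch of how this looks in scikit-learn, the fitted model exposes the impurity-based scores through its `feature_importances_` attribute, and `sklearn.inspection.permutation_importance` gives an accuracy-based alternative by shuffling one feature at a time. The Iris dataset and the parameter values below are assumptions for the example.

```python
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

data = load_iris()
X_train, X_test, y_train, y_test = train_test_split(
    data.data, data.target, test_size=0.3, random_state=0, stratify=data.target
)

clf = RandomForestClassifier(n_estimators=100, random_state=0)
clf.fit(X_train, y_train)

# Impurity-based (Gini / MDI) importances; they are scaled to sum to 1.
importances = clf.feature_importances_
ranked = sorted(zip(data.feature_names, importances), key=lambda p: p[1], reverse=True)
for name, score in ranked:
    print(f"{name}: {score:.3f}")
print("Sum of importances:", importances.sum())

# Accuracy-based alternative: shuffle each feature on held-out data
# and measure how much the score drops.
perm = permutation_importance(clf, X_test, y_test, n_repeats=10, random_state=0)
for name, drop in zip(data.feature_names, perm.importances_mean):
    print(f"{name}: mean accuracy drop {drop:.3f}")
```

Features near the bottom of both rankings are the usual candidates for removal, though it is worth re-checking model accuracy after dropping them.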

Random Forest applications

Random forests are used, with good and reliable results, in many different fields, including e-commerce, banking, and medicine. A few examples are discussed below:

- In the stock market, a random forest algorithm can be used to analyze stock trends and estimate likely loss and profit.
- In banking, a random forest can be used to identify loyal customers, that is, which customers will default and which will not, as well as fraudulent customers or customers with a bad record with the bank.
- In medicine, it can be used to work out the correct mixture of compounds, or to identify a disease from a patient's medical records.
- In e-commerce, a random forest can be used for recommending products.

What are the advantages and disadvantages of the Random forest algorithm?

Advantages:

- It reduces the overfitting problem of single decision trees.
- It is reasonably fast to train and, in implementations that support it, can deal with missing values.
- It is flexible and gives high accuracy.
- It can be used for both classification and regression tasks.
- It lets you compute the relative importance of features.
- It can give good accuracy even when a large proportion of the data is missing.

Disadvantages:

- Random forest is a complex algorithm that is not easy to interpret.
- Its computational complexity is high.
- Predictions take longer than with many other algorithms, because every tree must be evaluated.
- It needs more computational resources than simpler algorithms.

Conclusion

The random forest algorithm is one of my favorite algorithms because of the reliable results and high accuracy it gives. In this blog, I have tried to cover the random forest classifier: how it is used, what its working principle is, what role decision trees play in it, why you should use it, and where it is applied. Finally, I have discussed the advantages and disadvantages of using random forest algorithms.

Thanks for reading. I hope you have learned as much from this blog as I did by writing it. Follow Analytics Steps on Facebook, Twitter, and LinkedIn.
