What is Support Vector Regression?

- Ayush Singh Rawat

- Jun 21, 2021

Deep neural networks have sparked a new wave of AI enthusiasm, along with many intriguing and genuinely valuable AI-based applications. On many tasks they outperform competing approaches.

Does this mean, however, that we should abandon more traditional machine learning approaches?

No, and the explanation is straightforward: traditional methods pick up on things that deep learning algorithms don't. Because their mathematical structure is different, the mistakes they make are frequently different from those made by deep learning models.

This may appear to be a disadvantage, but it is not, because the models can be combined. You may even find that the ensemble performs better, since the differing errors of its members partly cancel one another out (Source).
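The cancelling effect can be illustrated with a small simulation. This is only a sketch under idealised assumptions: the two models are hypothetical, their errors are independent and zero-mean, and the ensemble simply averages the two predictions.

```python
import random

random.seed(0)

# Hypothetical setup: two models whose errors are independent,
# zero-mean and of similar size (standard deviation 1).
n = 10_000
errors_a = [random.gauss(0.0, 1.0) for _ in range(n)]
errors_b = [random.gauss(0.0, 1.0) for _ in range(n)]


def mse(errors):
    """Mean squared error of a list of residuals."""
    return sum(e * e for e in errors) / len(errors)


# Averaging two predictions also averages their errors.
errors_ensemble = [(a + b) / 2 for a, b in zip(errors_a, errors_b)]

mse_a = mse(errors_a)
mse_b = mse(errors_b)
mse_ensemble = mse(errors_ensemble)

print(f"model A MSE:  {mse_a:.3f}")
print(f"model B MSE:  {mse_b:.3f}")
print(f"ensemble MSE: {mse_ensemble:.3f}")
```

With independent errors the averaged error has roughly half the variance of either model. In practice the errors of real models are correlated to some degree, so the gain is usually smaller.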

In this article, I'll go through the benefits of SVR, how it's derived from support vector machines, and some key concepts.

Understanding Regression

Regression analysis is a statistical procedure used to identify the strength and type of the relationship between one dependent variable (typically Y) and a set of other variables (known as independent variables).

The goal of most linear regression models is to minimise the sum of squared errors. Take, for example, Ordinary Least Squares (OLS), a linear least squares method used in statistics to estimate the unknown parameters of a linear regression model.
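For a single feature, the OLS estimate has a simple closed form. The sketch below uses made-up data and hypothetical helper names (`ols_fit`, `sum_squared_errors`) to show that the fitted line has a smaller sum of squared errors than a slightly different line.

```python
def ols_fit(xs, ys):
    """Return (slope, intercept) minimising the sum of squared errors."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def sum_squared_errors(xs, ys, slope, intercept):
    """Sum of squared residuals of the line y = slope * x + intercept."""
    return sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))


# Illustrative data only.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.1]

slope, intercept = ols_fit(xs, ys)
sse_ols = sum_squared_errors(xs, ys, slope, intercept)
sse_other = sum_squared_errors(xs, ys, slope + 0.1, intercept)

print(f"slope={slope:.2f}, intercept={intercept:.2f}")
print(f"SSE of OLS line:       {sse_ols:.4f}")
print(f"SSE of perturbed line: {sse_other:.4f}")
```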

Lasso, Ridge, and ElasticNet are all extensions of OLS that add a penalty term to limit model complexity: Ridge shrinks the coefficients towards zero, while Lasso and ElasticNet can set some of them exactly to zero and so reduce the number of features used in the final model. As with many models, however, the aim is still to lower the error on the test set.
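Written out, the three penalised objectives differ only in the penalty added to the squared error. The notation here is an assumption made for illustration (it does not appear in the original article): $X$ is the feature matrix, $y$ the targets, $\beta$ the coefficient vector, and $\lambda, \lambda_1, \lambda_2 \ge 0$ the penalty strengths.

$$
\begin{aligned}
\text{Ridge:} \quad & \min_{\beta} \; \lVert y - X\beta \rVert_2^2 + \lambda \lVert \beta \rVert_2^2 \\
\text{Lasso:} \quad & \min_{\beta} \; \lVert y - X\beta \rVert_2^2 + \lambda \lVert \beta \rVert_1 \\
\text{ElasticNet:} \quad & \min_{\beta} \; \lVert y - X\beta \rVert_2^2 + \lambda_1 \lVert \beta \rVert_1 + \lambda_2 \lVert \beta \rVert_2^2
\end{aligned}
$$

Setting every penalty strength to zero recovers plain OLS.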

What if we only care about reducing errors to a certain extent? What if we don't mind how large our errors are, as long as they fall within an acceptable range? Take house pricing, for instance. What if we're fine with a prediction that is off by a certain amount of money, say $4,000? Then, as long as the error stays within that range, we can give our model some freedom in how it arrives at its predictions.
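This tolerance idea is usually expressed as an epsilon-insensitive loss: errors inside the tolerance cost nothing, and only the part of an error beyond it is penalised. Here is a minimal sketch using the $4,000 tolerance from the example; the house prices and the function name are made up for illustration.

```python
def epsilon_insensitive_loss(y_true, y_pred, epsilon):
    """Zero inside the tolerance band, linear in the excess outside it."""
    return max(0.0, abs(y_true - y_pred) - epsilon)


epsilon = 4_000.0        # tolerance from the house-price example
true_price = 250_000.0   # hypothetical true price

losses = {}
for predicted in (248_500.0, 254_000.0, 260_000.0):
    losses[predicted] = epsilon_insensitive_loss(true_price, predicted, epsilon)
    print(f"predicted {predicted:>9,.0f} -> loss {losses[predicted]:,.0f}")
```

The first two predictions are off by $1,500 and $4,000, so they incur no loss. The third is off by $10,000 and is penalised only for the $6,000 that exceeds the tolerance.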

What distinguishes support vector regression from other forms of regression is the way it fits the data. In linear and polynomial regression, the form of the regression line or curve is chosen by the user in advance.

A simple linear regression always follows the equation (y = mx + c), while a polynomial regression of degree n follows an equation of the form (y = a_n x^n + ... + a_1 x + c).

Neither can choose the form of the regression function on its own. In a nutshell, a support vector regression with a suitable kernel is more flexible: the shape of the fitted function is determined mathematically from the data rather than fixed beforehand.

What is a Support Vector Machine?

To grasp the concept of support vector regression, you must first understand the idea of support vector machines. The goal of the support vector machine algorithm is to find a hyperplane in an n-dimensional space, where n denotes the number of features or independent variables, that separates the data points of different classes.

Let me give you an example. We'll use classification, because SVMs are used to classify data in the vast majority of situations.

The SVM is one of the best-known supervised machine learning methods. It is among the most powerful models for solving classification problems, including classifying data that isn't linearly separable.

I'll use a traditional kitchen example. I'm sure we all enjoy chips; I certainly do. I wanted to make my own, so I went to the produce store, bought potatoes, and went straight to my kitchen. My only preparation was watching a YouTube video. I started frying the potato slices in hot oil, which turned out to be a nightmare, and I ended up with dark brown/black chips.

What went wrong? I bought potatoes, followed the video's instructions, and made sure the oil was at the proper temperature. Was there anything I was missing? When I did my research and spoke with experts, I discovered that I had missed the trick: I had picked potatoes with a higher starch content rather than ones with a lower starch content.

All of the potatoes looked the same to me. This is where the experts' years of training came into play: they had well-trained eyes for selecting potatoes with a lower starch content. Such potatoes have several distinguishing characteristics: they look fresh, they have some extra skin that can be scraped off with a fingernail, and they look muddy.

The point of the chips example was to illustrate a nonlinear dataset. While many classifiers, such as logistic regression, work well on linearly separable data, SVMs can handle highly non-linear data by using a remarkable technique known as the kernel trick.

The kernel trick implicitly maps the input vectors to a higher-dimensional feature space (it adds more dimensions), rearranging the dataset in such a way that it becomes linearly separable.
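One way to see what "implicitly" means is to compare a kernel with the explicit mapping it stands in for. The sketch below uses a degree-2 polynomial kernel on 2-D inputs; `feature_map` and `poly_kernel` are hypothetical helper names, and the two points are arbitrary.

```python
import math


def dot(u, v):
    """Ordinary dot product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))


def feature_map(x):
    """Explicit degree-2 map of a 2-D point into a 3-D feature space."""
    x1, x2 = x
    return (x1 * x1, math.sqrt(2) * x1 * x2, x2 * x2)


def poly_kernel(x, z):
    """Homogeneous polynomial kernel of degree 2: (x . z) ** 2."""
    return dot(x, z) ** 2


x = (1.0, 2.0)
z = (3.0, 0.5)

explicit = dot(feature_map(x), feature_map(z))  # map first, then dot product
implicit = poly_kernel(x, z)                    # never leaves the 2-D space

print(f"explicit: {explicit:.6f}")
print(f"implicit: {implicit:.6f}")
```

Both routes give the same number, but the kernel gets there without ever constructing the higher-dimensional vectors. This is why a kernel can work in a very high-dimensional feature space at little extra cost.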

Introduction to Support Vector Regression

Support vector regression is one member of the support vector machine family. In other words, the support vector machine is a general method that can be applied to both regression and classification problems.

When the support vector machine is used for classification, it is referred to as support vector classification, and when it is used for regression, it is referred to as support vector regression.

Support Vector Regression (SVR) uses the same ideas as the SVM for classification, with a few small differences. For starters, because the output is a real number, it has an infinite number of possible values, which makes it very difficult to predict exactly.

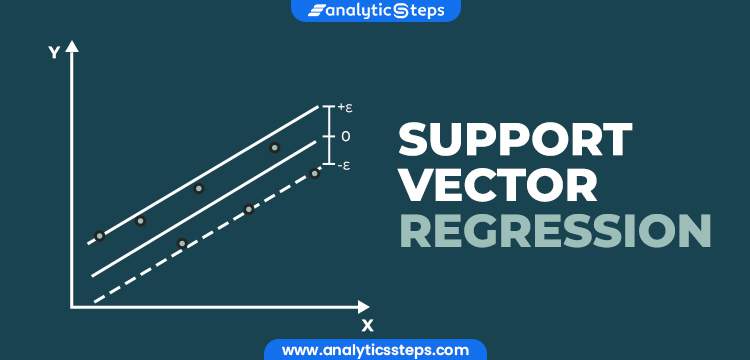

In the case of regression, a margin of tolerance (epsilon) is therefore set, within which errors are ignored. Apart from that, the algorithm itself is more complex, which must also be taken into account. However, the basic idea remains the same: to minimise error by finding the hyperplane that maximises the margin, while keeping in mind that part of the error is tolerated.

The introduction of an ε-insensitive zone around the function, known as the ε-tube, allows SVM to be generalised to SVR. With this tube, the optimization problem is reformulated as finding the tube that best approximates the continuous-valued function while balancing model complexity and prediction error.

SVR is formulated as an optimization problem by first defining a convex ε-insensitive loss function to be minimised and then finding the flattest tube that contains most of the training instances. As a result, the loss function and the geometrical properties of the tube are combined into a multiobjective function.

Then the convex optimization problem, which has a unique solution, is solved using suitable numerical optimization methods. The hyperplane is represented in terms of support vectors, which are the training samples that lie on or outside the boundary of the tube.
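For the linear case, this optimization problem is commonly written in the following primal form. The notation is an assumption added for illustration and does not come from the original article: $w$ and $b$ define the function $f(x) = w \cdot x + b$, $\xi_i$ and $\xi_i^*$ are slack variables measuring how far a point lies above or below the tube, and $C > 0$ sets the trade-off between flatness and tolerated deviations.

$$
\begin{aligned}
\min_{w,\, b,\, \xi,\, \xi^*} \quad & \frac{1}{2} \lVert w \rVert^2 + C \sum_{i=1}^{N} \left( \xi_i + \xi_i^* \right) \\
\text{subject to} \quad & y_i - (w \cdot x_i + b) \le \varepsilon + \xi_i, \\
& (w \cdot x_i + b) - y_i \le \varepsilon + \xi_i^*, \\
& \xi_i,\ \xi_i^* \ge 0, \qquad i = 1, \dots, N.
\end{aligned}
$$

Minimising $\lVert w \rVert^2$ corresponds to making the tube as flat as possible, while the slack terms charge only for points that fall outside the ε-tube.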

The support vectors are the most influential instances and determine the shape of the tube. As in any supervised-learning setting, the training and test data are assumed to be independent and identically distributed (iid), drawn from the same fixed but unknown probability distribution.

Important terminologies in Support Vector Regression

Some important terms that are central to how SVR works are:

- Kernel:

A kernel is a function that maps a lower-dimensional data set into a higher-dimensional space. It helps in finding a hyperplane in the higher-dimensional space without increasing the computing cost.

Computing cost usually rises as the dimension of the data grows. Moving to a higher dimension becomes necessary when we cannot find a separating hyperplane in the given dimension.

- Hyperplane:

In SVM, this is the line separating the data classes. In SVR, we define it instead as the line that helps us predict the continuous target value.

- Boundary lines:

Besides the hyperplane, there are two lines in SVM that create a margin around it. The support vectors can lie on or outside these boundary lines, which separate the two classes.

The concept is the same in SVR. For simplicity, a decision boundary can be thought of as a demarcation line, with positive examples on one side and negative examples on the other.

Instances lying on this line may be classified as either positive or negative. The same SVM concept is applied in Support Vector Regression as well.

- Support vectors:

These are the data points closest to the boundary, at minimal distance from it. In SVR, the support vectors are the points that lie on or outside the ε-tube. The smaller the value of ε, the more points lie outside the tube, and hence the more support vectors there are.
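The relationship between ε and the number of support vectors can be checked with a short experiment. This is a sketch only: it assumes scikit-learn's `SVR` class, uses a synthetic noisy sine curve, and the kernel, `C` and ε values are illustrative choices rather than recommendations.

```python
import numpy as np
from sklearn.svm import SVR

# Synthetic data: a noisy sine curve (illustrative only).
rng = np.random.default_rng(0)
X = np.sort(rng.uniform(0.0, 5.0, size=(80, 1)), axis=0)
y = np.sin(X).ravel() + rng.normal(0.0, 0.1, size=80)

support_counts = {}
for epsilon in (0.01, 0.1, 0.5):
    model = SVR(kernel="rbf", C=1.0, epsilon=epsilon)
    model.fit(X, y)
    # support_ holds the indices of the training points used as support vectors.
    support_counts[epsilon] = len(model.support_)
    print(f"epsilon={epsilon:<4} -> {support_counts[epsilon]} support vectors")
```

A narrow tube leaves most training points on or outside its boundary, so most of them become support vectors. A wide tube swallows most points and keeps only a few.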

Conclusion

SVR can outperform deep learning methods when the dataset is limited in size, and it typically requires much less training time in that setting. On small to medium-sized datasets, SVR is also computationally modest compared with many other regression algorithms while often achieving high accuracy.
